Products

In an ever-changing world, Maerifa Solutions brings together experienced people, state-of-the-art technology, financial acumen and services to deliver a consultancy-led, business-first approach, enabling customers to extract the maximum value from their data for competitive advantage.

AI Factory Infrastructure

The era of the AI Factory is here. Maerifa Solutions works with the industry's largest global OEMs, in direct partnership with Supermicro (leading on the NVIDIA roadmap) and Lenovo (leading on innovative, sustainable liquid cooling), to provide the high-density building blocks required to turn massive data into actionable intelligence.

Lenovo

Lenovo’s AI Factory portfolio unifies HGX AI Factory, GB200 AI Factory, and Neptune Liquid Cooling into a sustainability‑first platform engineered for large‑scale AI.

Designed to meet the thermal, density, and reliability demands of modern GPU clusters, Lenovo’s liquid‑cooled server technology delivers higher performance per rack, reduced energy consumption, and predictable scaling from pilot deployments to full production AI factories.

By combining NVIDIA‑accelerated compute with Lenovo’s global supply chain and proven enterprise service model, organisations gain an infrastructure foundation that is efficient, resilient, and ready for next‑generation LLM training, inference, and edge‑to‑core AI operations.

Lenovo HGX AI Servers

Lenovo ThinkSystem SC777 (NVIDIA GB200)

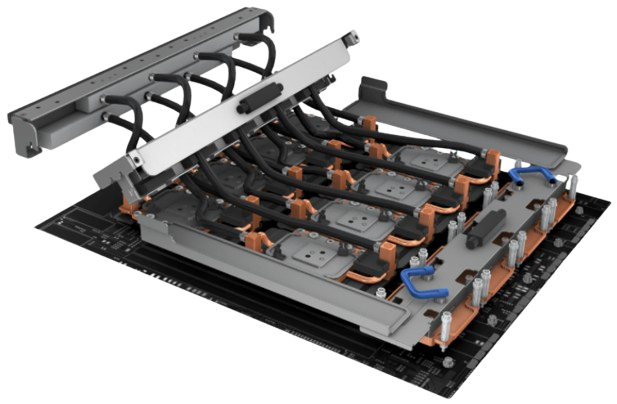

Lenovo Neptune® Liquid Cooling

Supermicro

Supermicro’s AI Factory solutions combine HGX AI Factory, GB200 AI Factory, and advanced liquid‑cooling architectures to deliver extreme density, rapid deployment, and uncompromising performance for modern AI workloads. With a modular design philosophy and one of the industry’s fastest engineering‑to‑rack delivery cycles, Supermicro enables enterprises to scale from single nodes to full rack‑scale GPU clusters with remarkable efficiency.

Supermicro’s NVIDIA‑optimised platforms maximise throughput for LLM training, inference, and multimodal AI, while flexible cooling options (air, direct‑to‑chip liquid, or full immersion) ensure sustained performance at scale. The result is an AI infrastructure foundation that is agile, power‑efficient, and engineered for organisations that need to move fast without sacrificing reliability.

Supermicro HGX Rack Scale B200/B300 AI Factory

Supermicro MGX Rack Scale GB200/GB300 NVL72 AI Factory

Supermicro DLC-2 Liquid Cooling

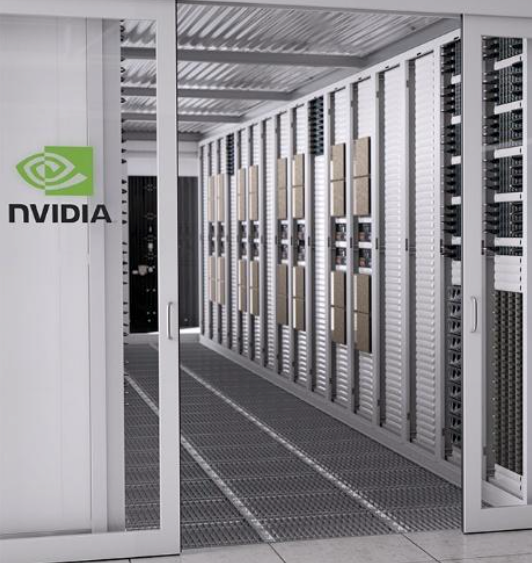

NVIDIA

NVIDIA provides the intelligence layer of the modern AI Factory with a portfolio spanning NVIDIA AI Enterprise, Spectrum‑X, and Quantum InfiniBand, forming an end‑to‑end acceleration stack unmatched in performance and ecosystem maturity.

NVIDIA AI Enterprise delivers a secure, optimised software foundation for building, deploying, and managing generative AI at scale, while Spectrum‑X introduces a purpose‑built Ethernet fabric engineered for predictable, lossless throughput across large GPU clusters.

Quantum InfiniBand extends these capabilities with ultra‑low‑latency, high‑bandwidth interconnects that unlock the full performance of NVIDIA HGX systems. Together, these technologies accelerate every stage of the AI lifecycle—from data preparation to frontier‑scale model training—enabling enterprises to innovate faster, operate more efficiently, and scale with confidence.

NVIDIA AI Enterprise

NVIDIA Spectrum-X

NVIDIA Quantum InfiniBand

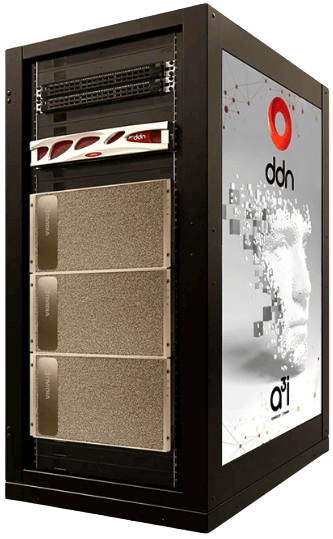

DDN

DDN delivers a next‑generation data platform engineered specifically for the performance, scale, and reliability demands of modern AI. As one of the industry’s most trusted leaders in accelerated storage, DDN provides the high‑throughput, low‑latency data pipelines required to keep GPU clusters fully utilised, ensuring that even the largest training workloads run at peak efficiency.

DDN’s AI‑optimised architecture is built to eliminate bottlenecks across the entire data lifecycle. Whether feeding multi‑petabyte training datasets into NVIDIA HGX systems, supporting real‑time inference pipelines, or powering high‑performance analytics, DDN solutions deliver predictable performance at any scale. With seamless integration into enterprise and hyperscale environments, DDN enables organisations to simplify operations, accelerate time‑to‑insight, and unlock the full value of their data.

DDN A3I

DDN EXAScaler

VAST Data

VAST Data delivers a radically modern approach to AI‑ready storage, built on a unified, hyperscale data platform that eliminates the traditional trade‑offs between performance, capacity, and simplicity. Designed for the era of large‑scale AI and data‑driven computing, VAST’s architecture provides a single, global namespace capable of supporting exabyte‑scale datasets with uncompromising speed and efficiency.

At the heart of the VAST Data Platform is a disaggregated, shared‑everything architecture that combines high‑performance NVMe flash, intelligent data reduction, and a global metadata engine. This enables organisations to feed GPU clusters at line‑rate, accelerate model training cycles, and simplify data management across the entire AI lifecycle—from ingest and preprocessing to training, inference, and long‑term retention.

VAST’s platform is engineered for enterprises building AI factories, HPC environments, and large‑scale analytics pipelines. With consistent performance at any scale, built‑in data protection, and seamless integration with NVIDIA and Supermicro ecosystems, VAST empowers organisations to deploy AI infrastructure that grows effortlessly with their ambitions.

VAST Data Platform

VAST DASE Architecture

WEKA

WEKA provides a high‑performance, software‑defined data platform purpose‑built for the extreme demands of AI, HPC, and data‑intensive enterprise workloads. Its next‑generation architecture is designed to eliminate I/O bottlenecks and maximise GPU utilisation, ensuring that even the largest and most complex AI pipelines run at full speed.

The WEKA Data Platform delivers a unified, POSIX‑compliant file system that spans NVMe flash, object storage, and cloud environments, enabling organisations to build a single, high‑performance data layer across their entire AI estate. With advanced data tiering, snapshotting, and multi‑protocol support, WEKA simplifies data movement, accelerates training and inference, and provides the operational agility required for modern AI factories.

WEKA’s ability to deliver consistent, ultra‑low‑latency performance at scale makes it a preferred choice for enterprises training frontier‑scale models, running GPU‑dense clusters, or operating hybrid cloud AI environments. Its cloud‑native design ensures that organisations can scale seamlessly—from on‑premises supercomputing environments to multi‑cloud deployments—without compromising performance or control.

WEKA NeuralMesh™

WEKApod™